Have you ever used a chatbot that understood your first few questions, then left you frustrated when it stopped responding properly?

That gap between expectation and experience is what disrupts conversational flows.

More teams today are building chatbots and conversational interfaces to guide users through tasks from onboarding to support. But testing these flows isn’t like testing a static webpage. Users don’t click predictable buttons. They type freely, interpret prompts differently, and expect the system to adapt in real time.

This creates a new kind of challenge.

This post examines why conversational flows fail in real-world scenarios, how UX teams should test them effectively, and what signals matter before launch.

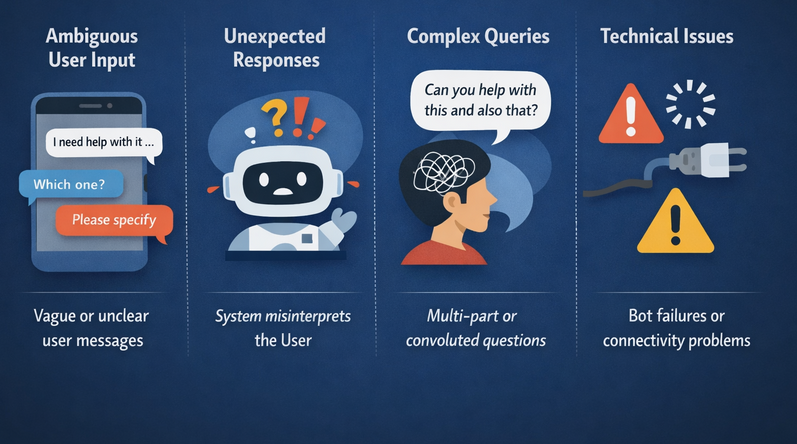

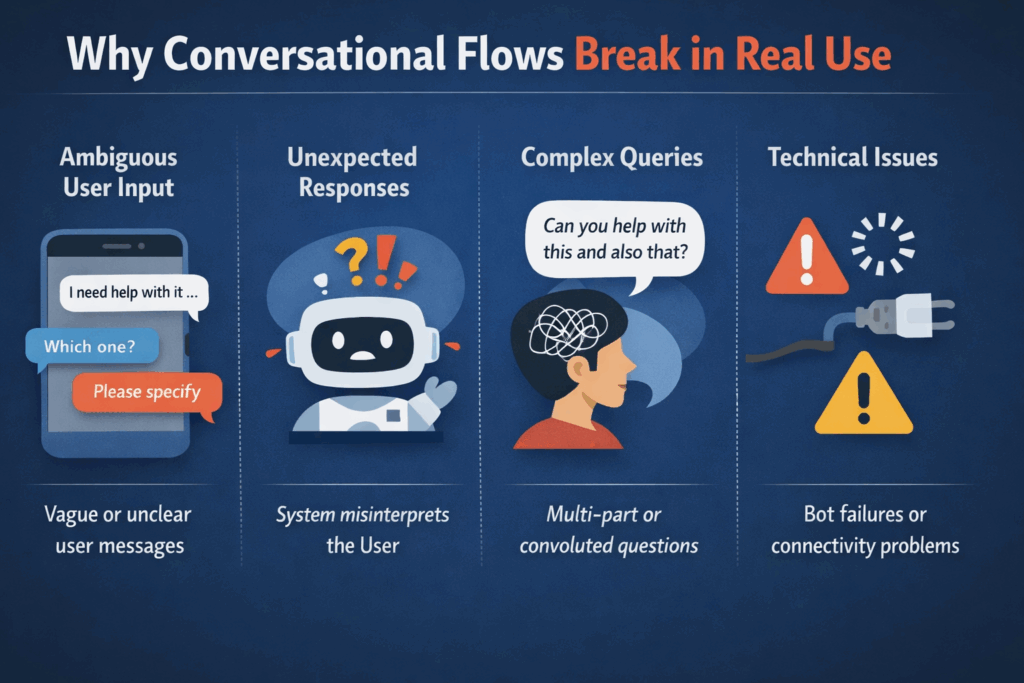

Why Conversational Flows Break in Real Use

Most conversational flows look polished in demos. They follow a clean and linear path. Everything works as expected.

But real users rarely behave that way.

Users don’t follow expected paths

People don’t think in predefined flows. They skip steps, ask unrelated questions, or change their minds halfway through.

Consider a user who is trying to cancel a subscription. Instead of following the “Cancel” path, they might type:

“I don’t want to be charged anymore.”

If your system only starts the cancellation flow when it sees a keyword like “cancel,” it will miss this request entirely, even though the intent is clear.
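To make the failure concrete, here is a minimal sketch comparing a literal keyword check with a slightly broader cue list. The function names and the `CANCEL_CUES` patterns are illustrative assumptions, not any particular product’s logic; a production system would more likely use a trained intent classifier.

```python
import re

# Hypothetical cue patterns for a "cancel subscription" intent (assumption:
# hand-written for illustration; real systems typically use an intent model).
CANCEL_CUES = [
    r"\bcancel\b",
    r"\bunsubscribe\b",
    r"\bstop (charging|billing)\b",
    r"\bdon't want to be (charged|billed)\b",
]


def keyword_match(message: str) -> bool:
    """Naive check: fires only when the literal word 'cancel' appears."""
    return "cancel" in message.lower()


def cue_match(message: str) -> bool:
    """Broader check: fires on any cue pattern, after normalizing apostrophes."""
    text = message.lower().replace("\u2019", "'")
    return any(re.search(pattern, text) for pattern in CANCEL_CUES)


message = "I don\u2019t want to be charged anymore."
print(keyword_match(message))  # False: the word "cancel" never appears
print(cue_match(message))      # True: matched by the "charged" cue
```

Even the broader list is brittle, which is the point: test sessions with real users are how you discover the phrasings you did not think to include.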

Prompts are easy to misunderstand

Even simple questions can confuse users.

Consider the following examples:

- “Select your plan type” vs

- “Which plan are you currently using?”

One is system-focused. The other is user-focused. That small difference can change how people respond to the question.

Users drop off quietly

Unlike forms that show errors, conversational interfaces don’t always reveal where things go wrong. Users just stop responding.

No feedback. No complaints. Just silence.

Context gets lost

If the system forgets what happened earlier in a conversation, the experience feels broken.

For instance:

User: “I want to upgrade my plan.”

Bot: “Which plan are you currently on?”

User: (answers with their current plan)

Bot: “What would you like to do today?”

That reset kills trust instantly. This is why testing conversational flows should focus on real behavior, not just whether the system technically works.
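One way to catch this kind of reset in recorded test sessions is to scan transcripts for an opening prompt that reappears after the user has already spoken. The sketch below assumes a transcript is a list of `(speaker, text)` pairs and that `OPENING_PROMPTS` holds your bot’s own greeting wording; both are illustrative choices.

```python
# Assumption: a transcript is a list of (speaker, text) pairs, and the opening
# prompt wording below is whatever your bot uses to start a conversation.
OPENING_PROMPTS = {"what would you like to do today?"}


def find_context_resets(transcript):
    """Return indices of bot turns that repeat an opening prompt after the user has spoken."""
    resets = []
    user_has_spoken = False
    for index, (speaker, text) in enumerate(transcript):
        if speaker == "user":
            user_has_spoken = True
        elif user_has_spoken and text.strip().lower() in OPENING_PROMPTS:
            resets.append(index)
    return resets


transcript = [
    ("user", "I want to upgrade my plan."),
    ("bot", "Which plan are you currently on?"),
    ("user", "The Basic plan."),  # placeholder answer, not from a real session
    ("bot", "What would you like to do today?"),
]
print(find_context_resets(transcript))  # [3]
```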

What UX Teams Should Look For During Testing

When testing conversational flows, asking “Did the user complete the task?” is not enough. You need to go deeper.

Where users drop off

Look for the exact moment users abandon a conversation. Is it after a confusing prompt? Is it when the system asks for too much information? Drop-offs tell you where friction lives.
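If your test sessions are logged, a simple tally of the last bot prompt in each abandoned session points to where friction concentrates. This is a rough sketch; the session structure (a `completed` flag plus a list of `(speaker, text)` turns) and the sample data are assumptions for illustration.

```python
from collections import Counter


def last_bot_prompts(sessions):
    """Count the final bot prompt in each session that ended without completion."""
    counts = Counter()
    for session in sessions:
        if session["completed"]:
            continue
        bot_turns = [text for speaker, text in session["turns"] if speaker == "bot"]
        if bot_turns:
            counts[bot_turns[-1]] += 1
    return counts


# Made-up sample sessions for illustration only.
sessions = [
    {"completed": False, "turns": [("bot", "How can I help?"), ("user", "cancel"),
                                   ("bot", "Please enter your account number")]},
    {"completed": False, "turns": [("bot", "How can I help?"), ("user", "I was charged twice"),
                                   ("bot", "Please enter your account number")]},
    {"completed": True, "turns": [("bot", "How can I help?"), ("user", "upgrade"),
                                  ("bot", "Done. You are now on Pro.")]},
]
print(last_bot_prompts(sessions).most_common(1))
# [('Please enter your account number', 2)]
```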

Where users hesitate

Hesitation is one of the most underrated signals in UX testing. If users pause, retype responses, or ask clarifying questions, something isn’t clear.

This matters especially in systems built on conversational AI. These systems rely on NLP and machine learning to interpret and respond to user inputs. While they often produce fluent and human-like responses, that fluency can mask underlying uncertainty in how intent is interpreted.

In practice, these systems infer intent based on patterns and probabilities, which means they can occasionally misinterpret context or ambiguity. When users rephrase or hesitate, it often signals a gap between what the system inferred and what the user actually meant.

This is where well-designed NLP solutions play a critical role by improving intent recognition, handling ambiguity more effectively, and continuously learning from user interactions.
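Retyped or rephrased messages can be flagged automatically in transcripts. The sketch below uses Python’s standard `difflib.SequenceMatcher` to compare each user message with the one before it; the 0.6 threshold is an arbitrary starting point, not a recommended value, and would need tuning against your own sessions.

```python
from difflib import SequenceMatcher


def find_rephrases(user_messages, threshold=0.6):
    """Return indices of messages that closely resemble the message just before them."""
    flagged = []
    for index in range(1, len(user_messages)):
        previous = user_messages[index - 1].lower()
        current = user_messages[index].lower()
        similarity = SequenceMatcher(None, previous, current).ratio()
        if similarity >= threshold:
            flagged.append(index)
    return flagged


# Made-up user messages for illustration only.
messages = [
    "I want to change my plan",
    "I want to change my subscription plan",
    "yes please",
]
print(find_rephrases(messages))  # [1]
```

A flagged index is only a prompt to go and read that part of the conversation; character similarity cannot tell you why the user rephrased.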

Where the flow breaks

Watch for:

- Loops (“I already answered this”)

- Dead ends (“I don’t understand”)

- Incorrect responses

These are structural issues rather than just content problems.
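Loops and dead ends leave recognizable traces in a transcript: the same bot prompt appearing more than once, or a fallback reply. A minimal sketch of that check follows, assuming `(speaker, text)` transcripts and a hypothetical `FALLBACK_PHRASES` tuple that you would replace with your bot’s actual fallback wording. Incorrect responses still need a human reviewer.

```python
from collections import Counter

# Assumption: these are the fallback phrasings your bot emits; adjust to match.
FALLBACK_PHRASES = ("i don't understand", "i didn't understand")


def find_structural_issues(transcript):
    """Flag repeated bot prompts (loops) and fallback replies (dead ends)."""
    bot_turns = [
        text.strip().lower().replace("\u2019", "'")
        for speaker, text in transcript
        if speaker == "bot"
    ]
    counts = Counter(bot_turns)
    loops = sorted(text for text, seen in counts.items() if seen > 1)
    dead_ends = sum(1 for text in bot_turns if text.startswith(FALLBACK_PHRASES))
    return {"loops": loops, "dead_ends": dead_ends}


# Made-up transcript for illustration only.
transcript = [
    ("bot", "What is your email address?"),
    ("user", "sam@example.com"),
    ("bot", "What is your email address?"),
    ("user", "I already answered this"),
    ("bot", "I don't understand."),
]
print(find_structural_issues(transcript))
# {'loops': ['what is your email address?'], 'dead_ends': 1}
```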

How the system handles unexpected input

Users will always surprise you, and comparing flow variants in A/B tests can reveal how each one copes with unexpected input.

They might:

- Type incomplete sentences

- Use slang

- Ask multiple questions at once

Does your system recover gracefully, or does it fail?

How easy it is to recover

Mistakes are inevitable. Recovery is what defines a good experience.

Can users:

- Correct themselves easily?

- Go back a step?

- Restart without frustration?

These signals reveal what is actually happening inside the flow, not just what was designed.

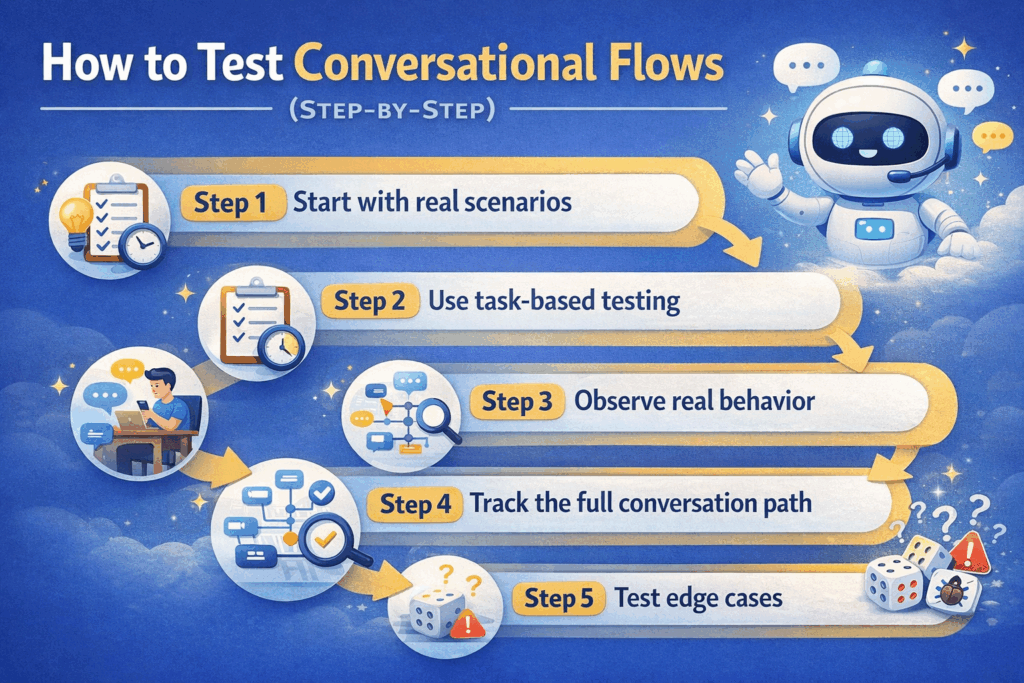

How to Test Conversational Flows (Step-by-Step)

Testing conversational UX doesn’t need to be complicated. But it should be intentional.

Step 1: Start with real scenarios

Move beyond prototype testing and avoid testing “perfect” flows.

Instead, use real user goals:

- “I want to cancel my subscription.”

- “I need help choosing a product.”

- “I was charged twice.”

These scenarios reflect intent, not ideal behavior.

Interestingly, many teams rely on internal scripts that read naturally enough to pass an AI detector, yet still fail when exposed to real human variability. That’s because users don’t behave like structured inputs; they behave unpredictably.

Step 2: Use task-based testing

Give users a goal instead of instructions.

Rather than saying “Click here, then type this…”

Say “Cancel your subscription using this chatbot.”

Then observe. Let them explore naturally.

Step 3: Use user testing to observe real behavior

This step is where the real insights come from.

Watch for:

- Confusion

- Hesitation

- Unexpected responses

Don’t interrupt. Don’t guide. If they struggle, that’s the point.

Step 4: Track the full conversation path

Don’t just look at the outcome. Analyze the journey:

- Where do users deviate?

- Where do they repeat themselves?

- Where do they give up?

By mapping these paths, you can often uncover patterns you didn’t expect.
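Path mapping can start very simply. The sketch below assumes each session has already been reduced to an ordered list of step labels and that you have written down the path the flow was designed around; the labels and `EXPECTED_PATH` are hypothetical.

```python
# Assumption: each session has been reduced to an ordered list of step labels,
# and EXPECTED_PATH is the designed "happy path" for the task.
EXPECTED_PATH = ("greet", "identify_plan", "confirm_cancel", "done")


def first_deviation(path, expected=EXPECTED_PATH):
    """Return the index where the path first differs from, or stops short of, the expected path.

    Returns None when the path matches the expected path exactly.
    """
    for index, step in enumerate(path):
        if index >= len(expected) or step != expected[index]:
            return index
    return None if len(path) == len(expected) else len(path)


# Made-up paths for illustration only.
paths = [
    ["greet", "identify_plan", "confirm_cancel", "done"],  # followed the design
    ["greet", "billing_question", "identify_plan"],        # deviated at step 1
    ["greet", "identify_plan"],                            # gave up at step 2
]
print([first_deviation(path) for path in paths])  # [None, 1, 2]
```

Counting how often each index comes up across sessions shows which step sends the most users off the designed path.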

Step 5: Test edge cases

Most failures don’t happen in ideal scenarios, so test beyond them, including on mobile.

They happen when users:

- Give vague answers

- Skip steps

- Change intent mid-conversation

For example, “Actually, never mind, can you show me cheaper options instead?”

This is where many systems break.

Reports like the IVR in 2026 report highlight how traditional systems struggle when customer queries don’t fit predefined paths, especially as users increasingly bring complex or multi-part requests into a single interaction.

If your system works only in perfect conditions, it’s not ready.

Common Issues Found in Conversational UX

From testing and industry observations, a few patterns show up consistently.

Rigid flows

Some systems only work if users follow a fixed path. But real conversations are flexible. Your system should be too.

Unclear language

If users don’t understand the prompt, everything else fails. Clarity beats cleverness every time.

No fallback responses

When the system doesn’t recognize input, what happens?

A good fallback:

- Acknowledges confusion

- Offers options

- Guides users forward

A bad fallback:

“I didn’t understand that.”
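The three qualities of a good fallback can be built into a single reply. Here is a minimal sketch; the function name, wording, and option list are illustrative assumptions rather than a prescribed template.

```python
def build_fallback(options):
    """Compose a fallback that acknowledges the miss, offers options, and points forward."""
    lines = [
        "Sorry, I'm not sure I understood that.",  # acknowledge confusion
        "Here are a few things I can help with:",  # offer options
    ]
    lines += [f"{number}. {option}" for number, option in enumerate(options, start=1)]
    lines.append("Reply with a number, or rephrase your request.")  # guide forward
    return "\n".join(lines)


reply = build_fallback(["Cancel my subscription", "Ask a billing question"])
print(reply)
```

During testing, check whether users actually pick one of the offered options or abandon the conversation at this point.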

Too many steps

Long conversations increase cognitive load. If a task takes 10 or more steps, expect many users to drop off.

Ask yourself: Can this be simplified?

Lack of feedback

Users need reassurance.

Simple cues like:

- “Got it”

- “You’re almost done.”

- “Here’s what happens next.”

…can significantly improve completion rates.

Fixing these issues improves usability and directly affects business outcomes.

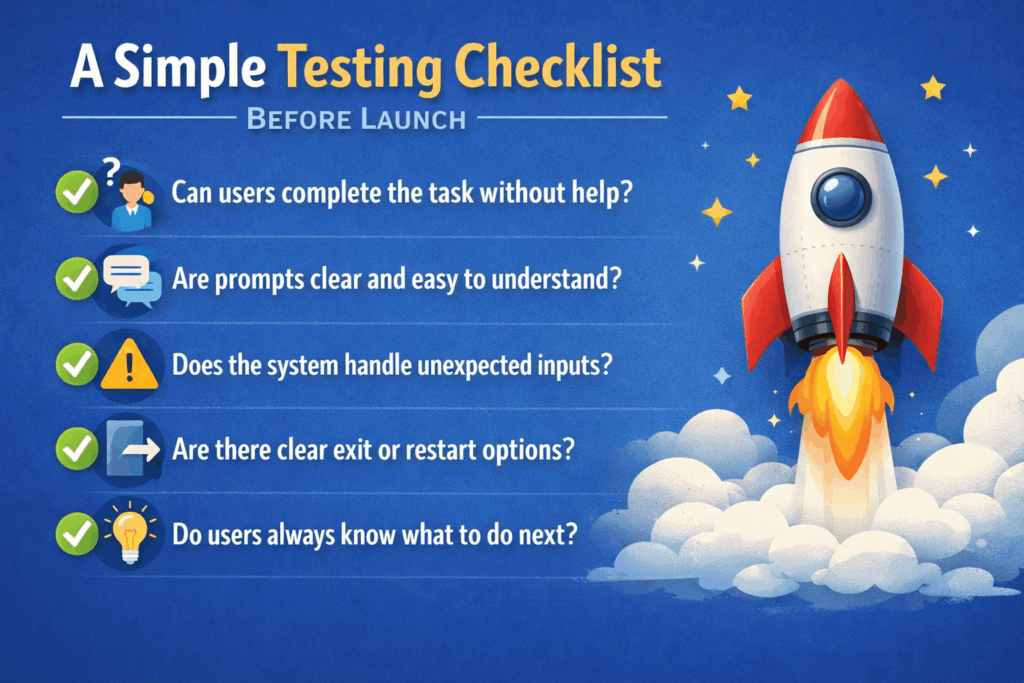

A Simple Testing Checklist Before Launch

Before launching any conversational flow, run through this quick checklist:

- Can users complete the task without help?

- Are prompts clear and easy to understand?

- Does the system handle unexpected inputs?

- Are there clear exit or restart options?

- Do users always know what to do next?

This list is not exhaustive, but it catches most critical issues early. A dedicated chatbot testing guide can help you go deeper.

How to Measure If Your Conversational Flow Works

Testing gives you insights. Metrics help you scale them.

Task completion rate

Are users achieving their goals? If not, where are they failing?

Drop-off rate

Track where users leave. Patterns here are incredibly valuable.

Time to complete

Is the flow efficient, or does it drag on? Longer doesn’t always mean better.

Error rate

How often does the system fail to respond correctly? Even small errors add up quickly.

User confidence

This is harder to measure, but critical.

Do users feel:

- In control?

- Understood?

- Comfortable continuing?

The best insights come from combining metrics with real user observation.
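For the quantitative metrics in this section, a rough sketch of the arithmetic is shown below. The session fields (`completed`, `seconds`, `bot_turns`, `bot_errors`) are assumed names, the sample numbers are made up, and treating every incomplete session as a drop-off is a simplifying assumption.

```python
from statistics import median


def summarize_sessions(sessions):
    """Compute completion rate, drop-off rate, median completion time, and error rate."""
    if not sessions:
        raise ValueError("need at least one session")
    total = len(sessions)
    completed = [s for s in sessions if s["completed"]]
    bot_turns = sum(s["bot_turns"] for s in sessions)
    errors = sum(s["bot_errors"] for s in sessions)
    return {
        "completion_rate": len(completed) / total,
        # Simplification: every session that did not complete counts as a drop-off.
        "drop_off_rate": (total - len(completed)) / total,
        "median_seconds_to_complete": (
            median(s["seconds"] for s in completed) if completed else None
        ),
        # Share of bot replies that a reviewer marked as incorrect.
        "error_rate": errors / bot_turns if bot_turns else 0.0,
    }


# Made-up sample data for illustration only.
sessions = [
    {"completed": True, "seconds": 90, "bot_turns": 5, "bot_errors": 0},
    {"completed": True, "seconds": 150, "bot_turns": 8, "bot_errors": 1},
    {"completed": False, "seconds": 40, "bot_turns": 3, "bot_errors": 1},
    {"completed": False, "seconds": 200, "bot_turns": 4, "bot_errors": 2},
]
print(summarize_sessions(sessions))
```

User confidence does not reduce to a formula like this, which is why it is usually gathered through post-task questions and observation.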

How This Helps UX Teams Improve Results

When UX teams test conversational flows properly, the impact is immediate.

They can:

- Reduce drop-offs by identifying friction points early

- Improve clarity by refining prompts based on real behavior

- Handle unexpected inputs more effectively

- Create smoother and more natural user journeys

Teams building chatbot experiences on a modern website builder should test conversational flows across real pages, devices, and user journeys.

Instead of guessing what works, teams can rely on actual user interactions.

And that’s the difference between a chatbot that functions and one that feels intuitive.

Final Thoughts

Conversational flows don’t fail in controlled environments. They fail when real users:

- Respond differently than expected

- Misinterpret prompts

- Lose patience halfway through

That’s why testing should aim to uncover where the flow breaks.

If you’re building or refining a conversational experience, start by observing real users. Watch where they struggle, listen to how they respond, and refine the system accordingly.

Because in the end, great conversational UX is about adapting to imperfect human behavior.

Want to take this further?

If you want to improve your conversational flows, you need to test them the way users experience them. Explore Loop11, where you can test usability with both AI browser agents and real users. This can help you find where conversations break, where users hesitate, and what needs fixing.

- Testing Conversational Flows: What UX Teams Should Look For - April 27, 2026

