The slide deck hits the screen. The usability metrics look pristine. You see a 90% task completion rate and a highlight reel of successful clicks. The stakeholders exhale. The feature is marked “validated,” and the engineering team gets the green light to ship.

Then you launch. And the support tickets flood in.

The feature that “crushed it” in testing is failing in the wild.

How does a feature pass the lab test so easily but fail the reality test?

The problem isn’t your data. It is your interpretation of it. Too many teams treat usability testing like a binary exam. They look for the “success” and miss the red flags hiding in plain sight. They confuse ability with desirability. They mistake politeness for satisfaction.

You are likely sitting on a goldmine of insights. You are just throwing them away because they don’t fit the success narrative. This guide rips apart the three most common interpretation traps in UX research and shows you how to stop looking for validation and start seeing the truth.

Mistake 1: “Nice User” Bias (Overvaluing Verbal Feedback)

Human beings are socially conditioned to be agreeable. Unless you are specifically recruiting from a particularly grumpy demographic, your participants naturally want you to feel good about your work. They know you are watching, and that knowledge invariably filters their feedback.

This results in the “nice user” bias.

We see this constantly in session recordings. A participant might struggle for three minutes to locate the “Checkout” button. They click the wrong link twice, open the footer, scroll back up, and finally stumble upon the cart icon. Yet, the moment the task is complete, they smile at the camera and say, “That was pretty easy. I really like the blue.”

Relying on the audio transcript makes this session look like a win. You log the “easy” comment and move on.

The Reality Check:

Verbal feedback is often a trailing indicator of social pressure, not a leading indicator of usability. What users say is an opinion; what they do is a fact.

In many cases, users blame themselves for UI failures. When they can’t find a menu item, they don’t think, “This information architecture is flawed.” They think, “I’m bad with computers.” To cover this embarrassment, they overcompensate with praise once they finally complete the task.

What to do instead:

When reviewing your session recordings, watch the first pass with the sound off. This strips away the social layer and forces you to look strictly at the mechanics of the interaction.

- Watch the mouse: Confident, straight lines indicate intent. Hovering, erratic circles indicate disorientation.

- Watch the scroll: Rapid scrolling past a Call-to-Action (CTA) means the element is effectively invisible, regardless of how much the user claims to “love the layout.”

- Watch the clicks: Rapid, repeated clicks on elements that do not respond (“rage clicks”) are among the most honest forms of feedback.
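If your tool lets you export cursor positions and click events, the first and third signals above can be roughly quantified. The sketch below is only an illustration: the `Click` record, the field names, and the thresholds (three clicks within two seconds) are assumptions, not part of any particular tool's export format.

```python
import math
from dataclasses import dataclass


@dataclass
class Click:
    t: float           # seconds since session start
    target: str        # hypothetical element identifier
    interactive: bool  # does the element respond to clicks?


def path_straightness(points):
    """Straight-line distance divided by distance actually travelled.

    1.0 is a perfectly direct movement; values near 0 mean wandering.
    """
    if len(points) < 2:
        return 1.0
    travelled = sum(math.dist(a, b) for a, b in zip(points, points[1:]))
    if travelled == 0:
        return 1.0
    return math.dist(points[0], points[-1]) / travelled


def find_rage_clicks(clicks, min_clicks=3, window=2.0):
    """Non-interactive targets clicked min_clicks+ times within `window` seconds."""
    times_by_target = {}
    for c in clicks:
        if not c.interactive:
            times_by_target.setdefault(c.target, []).append(c.t)

    flagged = set()
    for target, times in times_by_target.items():
        times.sort()
        # Slide over consecutive clicks and check whether any burst fits the window.
        for i in range(len(times) - min_clicks + 1):
            if times[i + min_clicks - 1] - times[i] <= window:
                flagged.add(target)
                break
    return flagged
```

Treat the numbers as prompts for where to look in the recording, not as verdicts. A low straightness score tells you which thirty seconds to rewatch; it does not tell you why the user wandered.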

Mistake 2: “Perfect Scenario” Blind Spot

In a standard usability test, we hand participants a script. We tell them: “Imagine you want to buy a pair of red sneakers. Go to the site, find the sneakers, and add them to your cart.”

Most users eventually succeed at this. They have a singular goal, they are being incentivized to finish, and – crucially – they have zero distractions. The team looks at the high completion rates and assumes the design is flawless.

But this interpretation ignores the chaos of the real world.

In reality, your user is not just “buying sneakers.” They are checking your return policy, comparing the price to a tab open on Amazon, texting a friend for an opinion, and getting distracted by a Slack notification. When teams focus strictly on task completion rate, they miss what we might call the friction coefficient: the messy, non-linear journey the user actually took to get to the finish line.

The Reality Check:

A completed task does not equal a successful design. If a user tries to right-click an image to zoom in, fails, and then finds the official zoom button, they have technically “succeeded.”

But the design failed their instinct. If you treat these deviations as “user errors” or “out of scope,” you are throwing away your most critical data points.

What to do instead:

Analyze the “recoveries,” not just the successes. A session where a user makes a mistake and seamlessly corrects it is often more valuable than a session where they execute perfectly.

You need to hunt for desire paths.

In urban planning, a desire path is the dirt trail pedestrians wear into the grass because the paved sidewalk takes a longer route. In UX, these are the “wrong” clicks.

If three out of five users try to click a non-clickable header, that is not a mistake but a desire path. You should pave it. Instead of forcing them back to your happy path, ask why their instinct led them elsewhere. The “wrong” clicks often reveal the feature you should have built.

This pattern appears frequently across different interface types. In website builder tools, for example, users often attempt to directly drag and resize elements expecting immediate manipulation, when the actual workflow requires accessing a properties panel first. These instinctive actions, though technically ‘incorrect,’ signal where the interaction model should evolve.
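Spotting desire paths comes down to counting how many different participants made the same “wrong” click. Here is a minimal sketch, assuming you have logged each participant's clicks as `(target, interactive)` pairs; the data shape, the element names, and the 50% cut-off are all illustrative assumptions.

```python
from collections import defaultdict


def desire_paths(sessions, min_share=0.5):
    """Map each non-interactive target to the share of participants who clicked it.

    sessions: dict of participant id -> list of (target, interactive) tuples.
    Only targets clicked by at least `min_share` of participants are returned.
    """
    participants_by_target = defaultdict(set)
    for participant, clicks in sessions.items():
        for target, interactive in clicks:
            if not interactive:
                # A set counts each participant once, however often they clicked.
                participants_by_target[target].add(participant)

    total = len(sessions)
    if total == 0:
        return {}

    shares = {
        target: len(people) / total
        for target, people in participants_by_target.items()
    }
    return {t: s for t, s in shares.items() if s >= min_share}


sessions = {
    "p1": [("section-header", False), ("buy-button", True)],
    "p2": [("section-header", False)],
    "p3": [("section-header", False), ("footer-logo", False)],
    "p4": [("buy-button", True)],
    "p5": [("buy-button", True)],
}
paths = desire_paths(sessions)
```

In this made-up data, three of five participants clicked the non-clickable header, so it is the only element returned. The single click on the footer logo falls below the cut-off here, though it may still deserve a look in the recording.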

Mistake 3: Dismissing the “Outlier”

“Only one user struggled with that, so it’s probably just a fluke.”

This sentiment represents a dangerous misunderstanding of UX research.

It usually comes from confusing quantitative data with qualitative insights. You need thousands of data points to prove a trend in an A/B test. A single click means very little in that context.

Usability testing operates differently. You are looking for causes rather than just counting results. When you test with a small group of five participants, one user hitting a critical blocker represents a significant signal. That single user stands in for 20% of your test group. Projected naively across 10,000 active users, that “one outlier” becomes roughly 2,000 frustrated customers. Five sessions cannot pin down the true rate that precisely, but the scale of the risk is the point.
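To see how much uncertainty sits behind a “one in five” result, you can put an interval around it. The sketch below uses the Wilson score interval, a standard method for small-sample proportions. It is our addition for illustration, not something your testing tool reports, and the function name is our own.

```python
import math


def wilson_interval(failures, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = failures / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


low, high = wilson_interval(failures=1, n=5)
active_users = 10_000
print(f"Plausible failure rate: {low:.0%} to {high:.0%}")
print(f"Projected users affected: {low * active_users:,.0f} to {high * active_users:,.0f}")
```

For one failure in five sessions the interval runs from roughly 4% to 62%. The range is wide, which is exactly why you should not quote 2,000 as a forecast. But even the low end is hundreds of users, which is why you cannot wave the finding away either.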

Teams often dismiss these edge cases because fixing them is difficult. It is easier to label the user as “confused” than to refactor the code.

The Reality Check:

You cannot dismiss a user for being confused when your goal is to build a clear interface. If a user misunderstands a label, the label is unclear. If they miss a button, the contrast is likely too low.

In a qualitative test, a single instance of struggle is a valid finding if the design caused the problem.

What to do instead:

Assume that every small struggle you see in a controlled test will magnify in the real world. A user sitting in a quiet room might eventually figure out a confusing menu. A user on a noisy train will simply give up.

You should categorize these issues by severity rather than frequency.

Distinguish between a friction point and a cliff.

- Friction Point: The user hesitates but eventually proceeds. (Priority: Medium).

- Cliff: The user gets stuck and cannot proceed without help. (Priority: Critical).

If even one user falls off a cliff, you have a critical issue. The successful path of the other four users does not negate the failure of the design for the fifth. You cannot ship a feature that breaks for a portion of your audience just because others survived.
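If you keep your findings in a spreadsheet or script, the severity-first rule is easy to encode. This is a minimal sketch under our own assumptions: the `Finding` fields and the example issues are hypothetical, and the two priority labels simply mirror the definitions above.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    FRICTION = 1  # user hesitated but eventually proceeded
    CLIFF = 2     # user could not proceed without help


PRIORITY = {Severity.FRICTION: "Medium", Severity.CLIFF: "Critical"}


@dataclass
class Finding:
    issue: str
    affected: int      # number of participants who hit the issue
    needed_help: bool  # did anyone get stuck until the moderator stepped in?

    @property
    def severity(self):
        return Severity.CLIFF if self.needed_help else Severity.FRICTION


def triage(findings):
    """Sort by severity first; frequency only breaks ties."""
    return sorted(findings, key=lambda f: (-f.severity, -f.affected))


findings = [
    Finding("Hesitated over shipping label wording", affected=4, needed_help=False),
    Finding("Could not get past promo code field", affected=1, needed_help=True),
]
for f in triage(findings):
    print(f"{PRIORITY[f.severity]:<8} {f.affected}/5  {f.issue}")
```

Sorting this way puts the one-user cliff above the four-user friction point, which is the opposite of what a frequency-sorted list would show.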

Hidden Signals Checklist

You know to ignore the verbal praise. You know to watch for recoveries. But what specific behaviors should you be hunting for in your Loop11 session recordings?

Look for these three “micro-signals.” They often reveal more about the user experience than the completion rate ever will.

1. The Navigation “U-Turn”

Watch the user’s mouse as they navigate your menu or footer. A U-turn happens when a user clicks into a category, stays for less than two seconds, and immediately hits the back button or clicks away without scrolling.

This is a specific type of failure signal. It means your information architecture (IA) made a promise that the content didn’t keep. The link text created a specific expectation that the destination page failed to satisfy. When you observe this rapid retreat, the solution is rarely a complex redesign. You simply need to rewrite the navigation label to accurately describe the content waiting on the other side.
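If you log page visits with timestamps, U-turns are simple to flag. The sketch below assumes a hypothetical `PageVisit` record that you would fill in from your own notes or event log; the two-second threshold comes from the definition above.

```python
from dataclasses import dataclass


@dataclass
class PageVisit:
    nav_label: str  # the link text the user clicked to get here
    entered: float  # seconds since session start
    left: float     # seconds since session start
    scrolled: bool  # did the user scroll before leaving?


def find_u_turns(visits, max_dwell=2.0):
    """Return nav labels where the user left quickly without scrolling."""
    return [
        v.nav_label
        for v in visits
        if (v.left - v.entered) < max_dwell and not v.scrolled
    ]


visits = [
    PageVisit("Solutions", entered=12.0, left=13.2, scrolled=False),
    PageVisit("Pricing", entered=20.0, left=48.5, scrolled=True),
    PageVisit("Resources", entered=50.0, left=51.0, scrolled=True),
]
labels_to_rewrite = find_u_turns(visits)
```

In this invented session only “Solutions” is flagged. “Resources” was also a short visit, but the user scrolled, so it does not meet the definition. A label that shows up across several participants is a strong candidate for rewording.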

2. The Safety Check (Idle Behavior)

The most valuable insights often happen between the tasks. Pay close attention to what the user does when they think the test is paused, or while they are waiting for a page to load.

- Do they highlight the price? This usually indicates they are doing a mental calculation or preparing to copy-paste it for comparison.

- Do they hover over the URL bar? This is a trust signal. They are looking for the padlock icon or verifying the domain.

- Do they open a new tab? Even if they close it immediately, this shows a reflex to compare your product against a competitor.

These silent actions reveal the user’s anxiety levels. A user who feels safe doesn’t check the lock on the door.

3. The “Time-to-First-Click” Lag

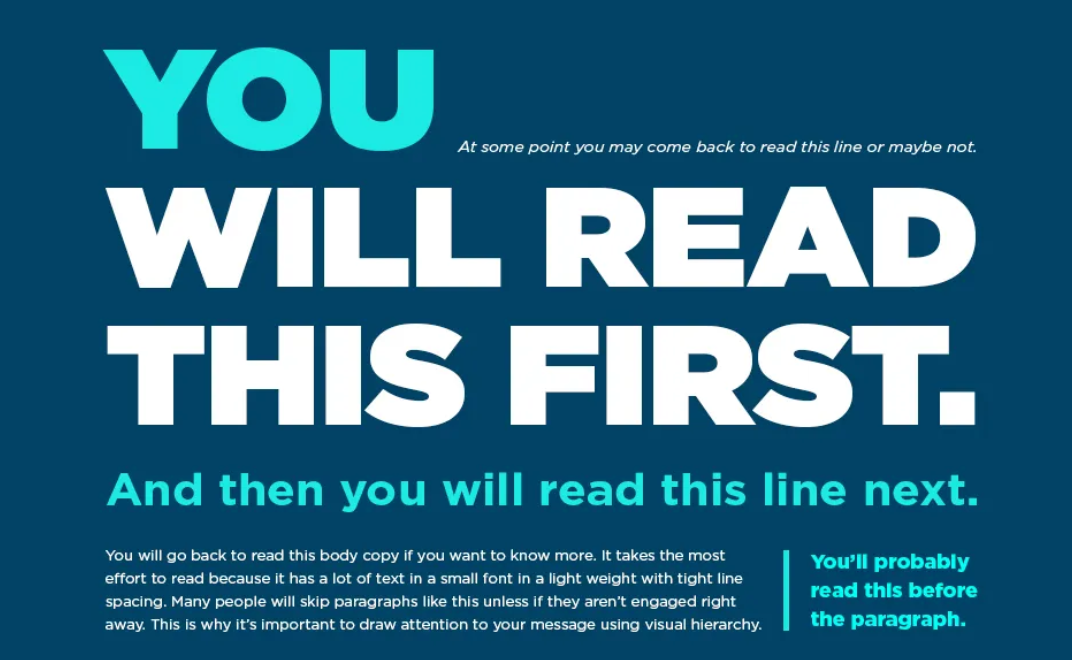

In Loop11, you can measure how long it takes for a user to interact with the interface after the prompt appears. A long “time-to-first-click” is a proxy for cognitive load.

If a user stares at the screen for eight seconds before moving their mouse, they are reading and processing. If they stare for fifteen seconds, they are lost. They are scanning the navigation and finding no “scent” of their goal. Even if they eventually click the right button, that fifteen-second lag is a design failure. It implies that your primary call-to-action is not distinct enough to catch the eye immediately.
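To compare lag across participants, you can bucket each measurement using the eight- and fifteen-second marks above. Treating those marks as hard cut-offs is our simplification, and the bucket names and sample timings below are made up for illustration.

```python
from statistics import median


def classify_ttfc(seconds, processing_at=8.0, lost_at=15.0):
    """Bucket a time-to-first-click value (in seconds)."""
    if seconds < 0:
        raise ValueError("seconds must be non-negative")
    if seconds >= lost_at:
        return "lost"
    if seconds >= processing_at:
        return "processing"
    return "direct"


# Hypothetical timings for one task, one value per participant.
ttfc_by_participant = {"p1": 2.5, "p2": 3.0, "p3": 9.0, "p4": 16.5, "p5": 4.0}

buckets = {p: classify_ttfc(s) for p, s in ttfc_by_participant.items()}
typical = median(ttfc_by_participant.values())
```

The median is a reasonable summary here because one very slow participant will not drag it upward the way a mean would. Still check the individual buckets: a single “lost” result is worth rewatching even when the median looks healthy.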

Conclusion: Trust the Struggle

Usability testing is primarily an exercise in empathy. The goal is to find out where your assumptions are wrong as quickly as possible.

When you analyze your next Loop11 test, look past the high completion rates. Ignore the polite compliments. Zoom in on the hesitation. Watch the back-button clicks. Listen to the heavy sighs. That friction is where the real improvement happens.

Your users are telling you the truth through their behavior. You just have to be brave enough to believe them.

- How UX Teams Misread Usability Test Results (And What to Do Instead) - March 23, 2026